“Nationwide on the move – at home locally.” That is the motto of DB Regio Bus, the bus division of DB Regio AG. DB Regio Bus focuses on local public transport in rural areas. With over 40 companies and subsidiaries, it is responsible for keeping bus transport running in large parts of Germany.

The challenge

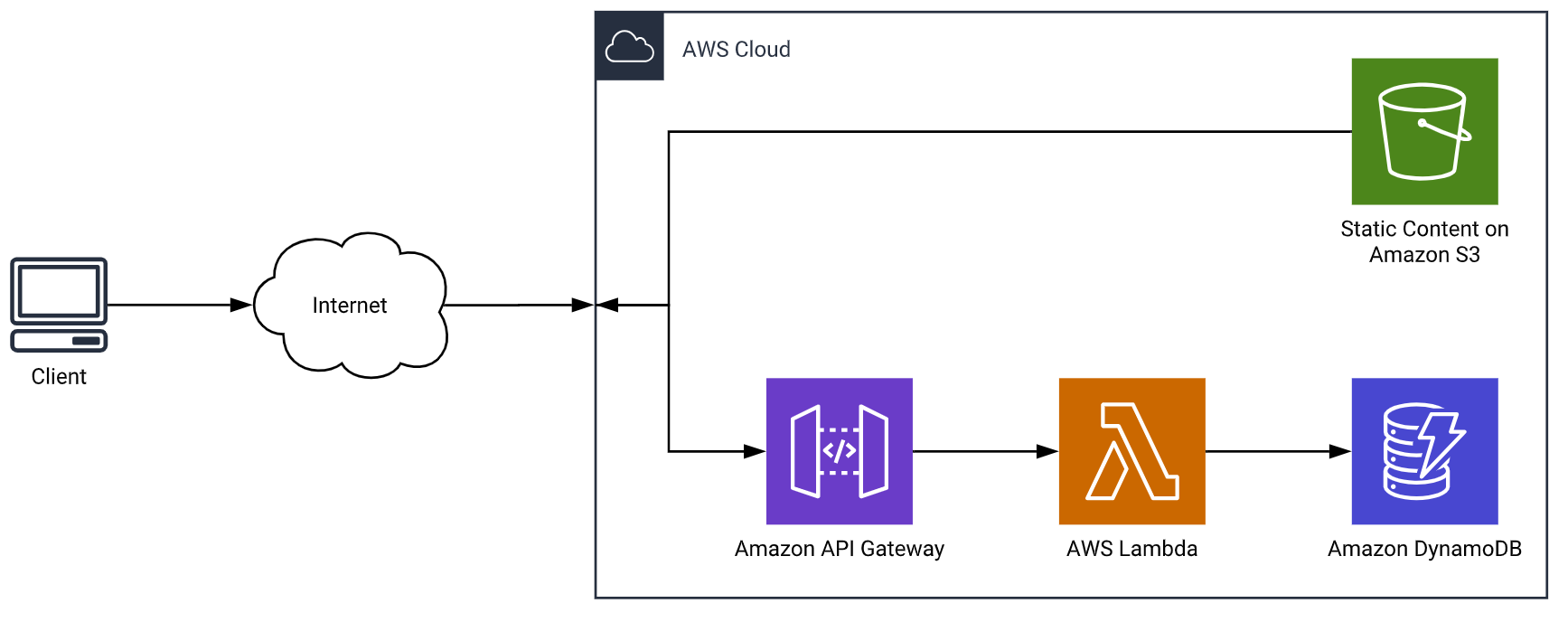

As a division of the Deutsche Bahn Group (DB), DB Regio Bus is subject to its parent company's compliance guidelines, which set clear rules for the management of IT infrastructure and data. A complete and up-to-date overview of the IT infrastructure is particularly important for a company with several complex business areas. A key component of the compliance guidelines is therefore an infrastructure inventory (asset register) that clearly shows which resources DB Regio Bus manages in the AWS cloud.

Since DB Regio Bus ITK maintains its own asset register (source system), it is necessary to synchronize this register with the central register of Deutsche Bahn (target system).

Meeting this requirement called for a new process and a new application.

The implementation had to address the following challenges:

- Separation of staging and production operations

- Implementation of the batch workload as a fully managed service to minimize the burden on operations teams (automated, daily execution of the workload)

- Ensuring that further development of the application requires little manual effort from developers

- Continuous further development and delivery of the application using CI/CD

- No permanent runtime: the runtime is provisioned on demand and exists only as long as there is a task to be completed

The solution

Division into test and production operations

To allow the application to be developed further while production remains stable, it was split into two identical but separate environments. The stable version of the application runs in the production environment, while optimization work continues in the test environment.

Automation of Docker image builds to ECR

The implemented CI/CD pipeline based on AWS CodePipeline, CodeCommit, CodeBuild, and CodeDeploy enables fully automated deployments in both environments. Unit and integration tests of the application artifact within the CI/CD pipeline are also automated. After successful testing, a Docker image is automatically created and stored in AWS ECR.

Automatic updates of the ECS service

As soon as a new image has been created, the new version is rolled out fully automatically to AWS Elastic Container Service (AWS ECS) using CodeDeploy. AWS ECS then ensures that the latest version of the application is used at the next trigger (see below).

Move to CloudWatch-triggered batch workload

The workload can be implemented as a batch workload: Data is read from the source database, converted within the application according to requirements, and transferred to the target system. Once all data records have been processed and transferred, there is no reason to continue running the application.
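The read, convert, and transfer steps described above can be sketched roughly as follows. This is a minimal illustration, not the actual DB Regio Bus application: the table layout, the field names, the `regio-bus-` identifier prefix, and the in-memory SQLite database standing in for the source register are all assumptions made for the example.

```python
import sqlite3
from typing import Callable, Iterable, Iterator


def read_assets(conn: sqlite3.Connection) -> Iterator[dict]:
    """Read every record from the (hypothetical) source asset table."""
    cursor = conn.execute("SELECT id, name, kind FROM assets ORDER BY id")
    for asset_id, name, kind in cursor:
        yield {"id": asset_id, "name": name, "kind": kind}


def to_target_format(record: dict) -> dict:
    """Convert a source record into the (assumed) shape of the target register."""
    return {
        "assetId": f"regio-bus-{record['id']}",
        "displayName": record["name"].strip(),
        "resourceType": record["kind"].upper(),
    }


def run_batch(records: Iterable[dict], send: Callable[[dict], None]) -> int:
    """Convert and transfer each record; return the number transferred."""
    count = 0
    for record in records:
        send(to_target_format(record))
        count += 1
    return count


def main() -> int:
    # In-memory stand-in for the source database (assumption for the sketch).
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE assets (id INTEGER, name TEXT, kind TEXT)")
    conn.executemany(
        "INSERT INTO assets VALUES (?, ?, ?)",
        [(1, " build-bucket ", "s3"), (2, "sync-task", "ecs")],
    )
    sent = []  # stand-in for the transfer to the target system
    transferred = run_batch(read_assets(conn), sent.append)
    print(f"transferred {transferred} records")
    # Returning here ends the process, so the container has nothing left to run.
    return transferred


if __name__ == "__main__":
    main()
```

Passing the transfer step in as the `send` callable keeps the conversion logic testable without a connection to the target system.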

AWS ECS was selected for this run-to-completion workload because the service is well suited to batch jobs. An AWS CloudWatch event trigger starts the application every day at a predefined time. Once all data records have been processed, the application stops automatically and is restarted only by the next trigger.

This reduces the cost of the implemented solution by a factor of approximately 10 compared to an architecture based on AWS EC2.
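The daily trigger could be described along the following lines. This is a sketch under stated assumptions rather than the configuration actually used: the 03:00 UTC start time, the target ID, and all ARNs and subnet IDs are placeholders. The helpers only build the values; in practice they would be passed to calls such as boto3's `put_rule` (as `ScheduleExpression`) and `put_targets` (as an entry in `Targets`) on the `events` client.

```python
def daily_schedule(hour_utc: int, minute: int = 0) -> str:
    """Build a schedule expression that fires once a day at the given UTC time.

    Field order: minutes, hours, day-of-month, month, day-of-week, year.
    """
    if not (0 <= hour_utc <= 23 and 0 <= minute <= 59):
        raise ValueError("hour must be 0-23 and minute 0-59")
    return f"cron({minute} {hour_utc} * * ? *)"


def ecs_target(cluster_arn: str, task_definition_arn: str,
               role_arn: str, subnets: list) -> dict:
    """Describe a rule target that starts one Fargate task per trigger."""
    return {
        "Id": "daily-asset-sync",  # hypothetical target name
        "Arn": cluster_arn,
        "RoleArn": role_arn,
        "EcsParameters": {
            "TaskDefinitionArn": task_definition_arn,
            "TaskCount": 1,
            "LaunchType": "FARGATE",
            "NetworkConfiguration": {
                "awsvpcConfiguration": {
                    "Subnets": subnets,
                    "AssignPublicIp": "DISABLED",
                }
            },
        },
    }


# Placeholder values; real ARNs and subnet IDs are not given in the text.
schedule = daily_schedule(3)
target = ecs_target(
    "arn:aws:ecs:eu-central-1:111111111111:cluster/example",
    "arn:aws:ecs:eu-central-1:111111111111:task-definition/example:1",
    "arn:aws:iam::111111111111:role/example-events-role",
    ["subnet-00000000"],
)
print(schedule)
```

Because the rule starts a task rather than a long-running service, nothing is billed for compute between two triggers.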

The advantages

Fully automated CI/CD pipeline from software change to deployment

The CI/CD pipeline implemented with AWS Developer Tools enables fully automated application updates. Updates and bug fixes can therefore be rolled out quickly, without manual deployment steps.

Minimization of operating costs through fully managed AWS services

Running AWS ECS in Fargate mode eliminates the need to update or patch the underlying servers, keeping the application's operating costs to a minimum.