The Most Critical Point: Schema Conversion

The true risk factor in a migration lies not in the data transfer itself, but in the schema.

Oracle is not PostgreSQL.

Differences exist in areas including:

- Data type definitions

- Precision and scale logic

- Default values

- Handling of NULL values

- Sequences

- PL/SQL vs. PL/pgSQL

- Trigger and constraint behavior

With numeric fields in particular, an incorrectly transferred scale can cause lost decimal places or discrepancies in calculation results. A migration that is technically reported as successful may already be compromised from a business perspective.
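The effect of a wrong scale can be illustrated with a minimal sketch using Python's `decimal` module. The column definitions (a source with four decimal places, a target mistakenly declared with two), the helper `store_with_scale`, and the sample values are assumptions chosen for illustration, not figures from a real project.

```python
from decimal import Decimal, ROUND_HALF_UP

def store_with_scale(value: Decimal, scale: int) -> Decimal:
    """Round a value the way a NUMERIC(p, scale) column would on insert."""
    quantum = Decimal(1).scaleb(-scale)  # scale=2 -> Decimal("0.01")
    return value.quantize(quantum, rounding=ROUND_HALF_UP)

# Hypothetical unit prices stored with four decimal places in the source.
source_prices = [Decimal("0.1249"), Decimal("0.1251"), Decimal("19.9950")]
quantity = 10_000

# Target column mistakenly declared with scale 2 instead of 4.
target_prices = [store_with_scale(p, 2) for p in source_prices]

source_total = sum(p * quantity for p in source_prices)
target_total = sum(p * quantity for p in target_prices)
difference = target_total - source_total

print(f"source total: {source_total}")
print(f"target total: {target_total}")
print(f"difference:   {difference}")
```

Every row loads without an error, yet the two totals differ by 50 units for these sample values. This is the kind of discrepancy that a purely technical success report does not reveal.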

For this reason, our migration process begins with a structured schema analysis.

We define a robust target schema and systematically examine:

- Which data types require adjustment?

- Which constraints are compatible?

- Which trigger logic needs to be refactored?

- Which stored procedures belong at the application layer?

- Which business-level validations are required?

The AWS Schema Conversion Tool (SCT) supports this process by analyzing existing structures, automatically converting compatible objects, and clearly identifying incompatible elements.

However, a tool cannot replace expert business judgment.

Automation accelerates the process, but it does not remove responsibility for the result.
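The data type part of such an analysis can be sketched as a simple lookup. The function `map_oracle_type` and its rules are a hypothetical illustration based on commonly used Oracle-to-PostgreSQL conventions. It does not reproduce the output of AWS SCT, and anything it cannot map safely is flagged for manual review rather than guessed.

```python
from typing import Optional

def map_oracle_type(data_type: str,
                    precision: Optional[int] = None,
                    scale: Optional[int] = None,
                    length: Optional[int] = None) -> str:
    """Suggest a PostgreSQL type for an Oracle column definition.

    Returns a string starting with "REVIEW:" when no safe default exists.
    """
    t = data_type.upper()
    if t == "NUMBER":
        if precision is None:
            # NUMBER without precision accepts any scale; keep it unconstrained.
            return "numeric"
        if scale is not None and (scale < 0 or scale > precision):
            return f"REVIEW: NUMBER({precision},{scale}) has an unusual scale"
        if not scale:  # scale is None or 0: whole numbers only
            if precision <= 4:
                return "smallint"
            if precision <= 9:
                return "integer"
            if precision <= 18:
                return "bigint"
            return f"numeric({precision})"
        return f"numeric({precision},{scale})"
    if t in ("VARCHAR2", "NVARCHAR2"):
        # Oracle lengths may be declared in bytes; verify against real data.
        return f"varchar({length})" if length else "text"
    if t == "CHAR":
        return f"char({length or 1})"
    if t == "DATE":
        # Oracle DATE carries a time of day down to the second.
        return "timestamp(0)"
    if t == "CLOB":
        return "text"
    if t in ("BLOB", "RAW"):
        return "bytea"
    return f"REVIEW: no default mapping for {data_type}"

# Hypothetical column definitions: (type, precision, scale, length)
for col in [("NUMBER", 10, 2, None), ("NUMBER", 9, None, None),
            ("DATE", None, None, None), ("XMLTYPE", None, None, None)]:
    print(col[0], "->", map_oracle_type(*col))
```

The `REVIEW:` results are the interesting part: they mark the columns where a person, not a rule, has to decide.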

Migration using the AWS Database Migration Service (DMS)

The actual data migration begins only once the target schema has been defined and validated.

For this stage, we typically rely on the AWS Database Migration Service (DMS):

- Initial Full Load

- Subsequent Change Data Capture (CDC)

- Continuous synchronization between source and target

- Runtime validation
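As a rough sketch, these four elements can be expressed as the request for a single DMS replication task. The helper `build_task_request`, the schema name `HR`, the task identifier, and the ARNs are placeholders assumed for illustration. The keys follow the parameters of boto3's `create_replication_task`, but the settings shown are a minimal subset and not a recommended production configuration.

```python
import json

def build_task_request(task_id: str, schema: str, source_arn: str,
                       target_arn: str, instance_arn: str) -> dict:
    """Assemble keyword arguments for boto3's DMS create_replication_task."""
    table_mappings = {
        "rules": [{
            "rule-type": "selection",
            "rule-id": "1",
            "rule-name": "include-schema",
            "object-locator": {"schema-name": schema, "table-name": "%"},
            "rule-action": "include",
        }]
    }
    task_settings = {
        # Compare source and target rows while the task is running.
        "ValidationSettings": {"EnableValidation": True},
        "Logging": {"EnableLogging": True},
    }
    return {
        "ReplicationTaskIdentifier": task_id,
        "SourceEndpointArn": source_arn,
        "TargetEndpointArn": target_arn,
        "ReplicationInstanceArn": instance_arn,
        # Initial full load, followed by ongoing change data capture.
        "MigrationType": "full-load-and-cdc",
        "TableMappings": json.dumps(table_mappings),
        "ReplicationTaskSettings": json.dumps(task_settings),
    }

# Placeholder identifiers; real ARNs come from the provisioned DMS resources.
request = build_task_request(
    task_id="oracle-to-aurora-hr",
    schema="HR",
    source_arn="arn:aws:dms:eu-central-1:111122223333:endpoint:SOURCE",
    target_arn="arn:aws:dms:eu-central-1:111122223333:endpoint:TARGET",
    instance_arn="arn:aws:dms:eu-central-1:111122223333:rep:INSTANCE",
)

# With boto3 installed and credentials configured, it could be sent as:
#   import boto3
#   boto3.client("dms").create_replication_task(**request)
print(json.dumps(request, indent=2))
```

Keeping the request as plain data makes it easy to review and version alongside the rest of the infrastructure definition.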

A well-designed migration model is not conceived in terms of data dumps and overnight maintenance windows.

Instead, it is built around controlled transitions.

Traditional "big-bang" migrations often entail months of preparation, data freezes, tightly scheduled cutover windows, and a heavy reliance on weekends. Go/No-Go decisions are frequently made under intense time pressure. Fallback scenarios must be prepared in advance. By Monday morning, everything must be fully operational.

This model generates pressure, both technically and organizationally. In our experience, pressure tends to produce errors rather than clean, sustainable results.

The structured use of DMS and the continuous synchronization of data changes mitigate this risk. Data is synchronized continuously, validations can be performed in parallel, and the final cutover is narrowed down to a clearly defined time window.

In this process, we specifically monitor and manage replication lag, transaction behavior, and load profiles. Particularly in high-transaction systems or environments with large volumes of large object (LOB) data, a finely tuned performance and monitoring strategy is crucial to ensure consistency and stability during the synchronization phase.
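One way to turn replication lag into a cutover criterion is sketched below. DMS publishes the latency metrics `CDCLatencySource` and `CDCLatencyTarget` to CloudWatch. The `LagSample` type, the function `ready_for_cutover`, the 5-second limit, and the requirement of three consecutive samples are assumptions for illustration and would need to be tuned per system.

```python
from dataclasses import dataclass
from typing import Sequence

@dataclass
class LagSample:
    source_latency_s: float  # e.g. CloudWatch metric CDCLatencySource
    target_latency_s: float  # e.g. CloudWatch metric CDCLatencyTarget

def ready_for_cutover(samples: Sequence[LagSample],
                      max_lag_s: float = 5.0,
                      required_consecutive: int = 3) -> bool:
    """Return True if the most recent samples all stayed within the lag limit."""
    if len(samples) < required_consecutive:
        return False
    recent = samples[-required_consecutive:]
    return all(max(s.source_latency_s, s.target_latency_s) <= max_lag_s
               for s in recent)

# Hypothetical measurements taken at regular intervals, oldest first.
catching_up = [LagSample(40, 55), LagSample(3, 4), LagSample(2, 3), LagSample(1, 2)]
late_spike = catching_up + [LagSample(2, 90)]

print(ready_for_cutover(catching_up))
print(ready_for_cutover(late_spike))
```

Requiring several consecutive samples avoids starting a cutover on a single lucky measurement while a large transaction is still in flight.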

As a result, migration becomes a predictable process rather than a nerve-wracking ordeal.

To me, a successful migration is not a Herculean effort squeezed into a long weekend, but rather a clean, streamlined process. When data is migrated continuously, scalability remains flexible, and security is factored in from the very start, migration transforms into genuine evolution.

Handling LOBs and Large Data Volumes

Large objects (LOBs) present a particular challenge. Documents, images, or videos have different storage and access requirements than relational datasets.

If LOBs are transferred without careful consideration, the result can be performance issues, unnecessary storage costs, or extended migration windows.

In such cases, we evaluate whether structured data and large objects should be handled separately, for instance by moving LOBs to an object store like Amazon S3 while migrating relational data to Aurora PostgreSQL.

This decision is not an architectural dogma, but rather a matter of meeting specific business and operational requirements.
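Where the separate handling is chosen, the split itself can be sketched as follows. The function `split_row`, the bucket name, the key layout, and the reference columns (`_uri`, `_size`, `_sha256`) are hypothetical choices for illustration. The sketch only prepares the data and leaves the actual upload out.

```python
import hashlib
from typing import Any, Dict, Tuple

def split_row(table: str, pk: Any, row: Dict[str, Any],
              lob_columns: Tuple[str, ...],
              bucket: str = "example-lob-bucket") -> Tuple[dict, dict]:
    """Split a row into a relational record and LOB payloads keyed by object key.

    The relational record keeps a reference (URI, size, SHA-256) in place of
    each LOB column so that both stores can be reconciled later.
    """
    relational = {k: v for k, v in row.items() if k not in lob_columns}
    uploads: Dict[str, bytes] = {}
    for col in lob_columns:
        data = row.get(col)
        if data is None:
            relational[f"{col}_uri"] = None
            continue
        key = f"{table}/{pk}/{col}"
        uploads[key] = data
        relational[f"{col}_uri"] = f"s3://{bucket}/{key}"
        relational[f"{col}_size"] = len(data)
        relational[f"{col}_sha256"] = hashlib.sha256(data).hexdigest()
    return relational, uploads

# Hypothetical source row with one binary column.
row = {"id": 7, "title": "Contract", "scan": b"%PDF-1.7"}
relational, uploads = split_row("documents", 7, row, ("scan",))

# Each upload could then be written with boto3, for example:
#   s3.put_object(Bucket="example-lob-bucket", Key=key, Body=data)
print(relational)
print(list(uploads))
```

Storing size and checksum next to the reference allows a later validation run to confirm that every object arrived intact without reading the objects back through the database.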

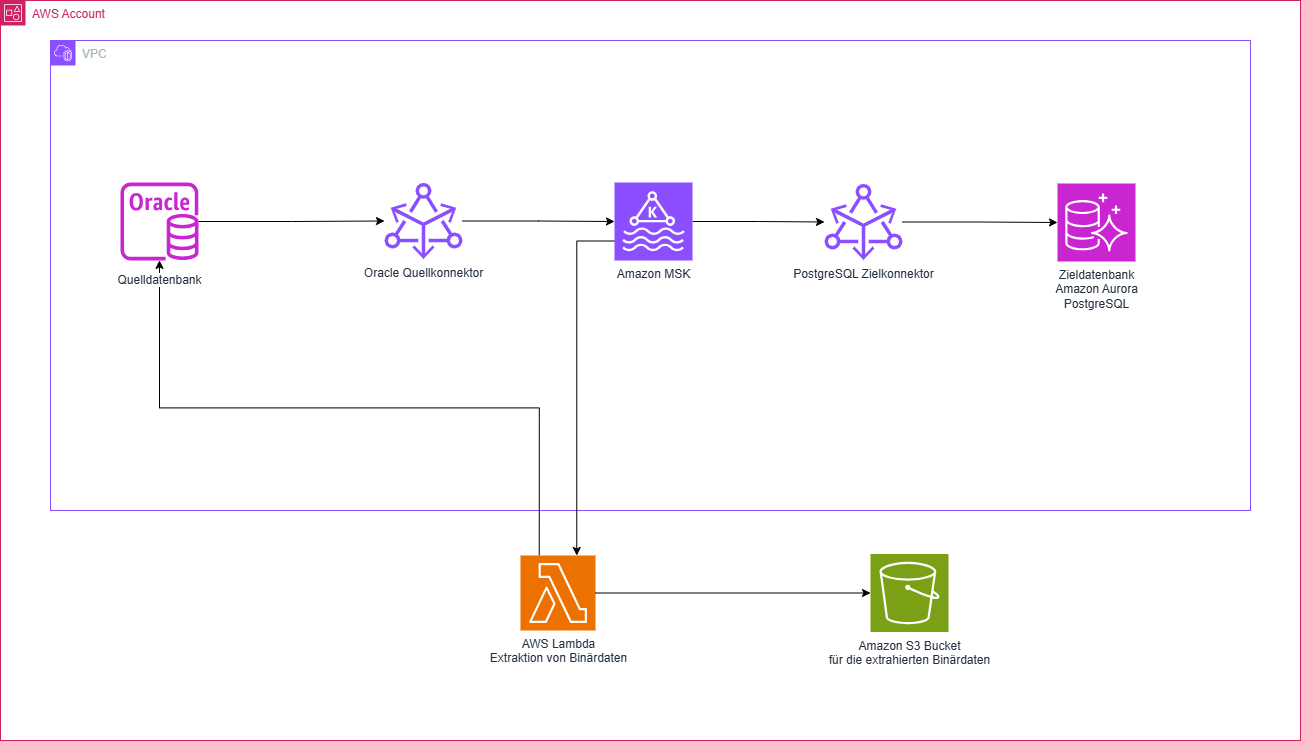

Streaming as a Complementary Option

In certain project scenarios, a DMS-only approach is not sufficient.

When:

- extremely large data volumes must be processed;

- downtime is effectively ruled out; and

- event-based downstream processing makes strategic sense;

then a streaming architecture can serve as a valuable complement to the migration.

One possible approach involves:

- Change Data Capture from Oracle;

- Processing via a Kafka-based platform, e.g., Amazon MSK;

- Utilizing Debezium to capture data changes;

- Separate extraction of large binary data via AWS Lambda;

- Storing relational data in Aurora PostgreSQL; and

- Storing LOBs in Amazon S3.
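The step from captured change to target table can be sketched as a small translation function. It assumes the consumer has already extracted the Debezium event payload with its `op`, `before`, and `after` fields. The function `to_statement`, the table and key names, and the psycopg-style `%s` placeholders are illustrative assumptions, and identifiers are treated as trusted in this sketch.

```python
from typing import Any, Dict, List, Optional, Tuple

Statement = Tuple[str, List[Any]]

def to_statement(event: Dict[str, Any], table: str, key: str) -> Optional[Statement]:
    """Translate one Debezium change event payload into parameterized SQL."""
    op = event["op"]  # "c" create, "u" update, "d" delete, "r" snapshot read
    if op in ("c", "u", "r"):
        row = event["after"]
        cols = list(row)
        placeholders = ", ".join(["%s"] * len(cols))
        updates = ", ".join(f"{c} = EXCLUDED.{c}" for c in cols if c != key)
        action = f"DO UPDATE SET {updates}" if updates else "DO NOTHING"
        sql = (f"INSERT INTO {table} ({', '.join(cols)}) VALUES ({placeholders}) "
               f"ON CONFLICT ({key}) {action}")
        return sql, [row[c] for c in cols]
    if op == "d":
        return f"DELETE FROM {table} WHERE {key} = %s", [event["before"][key]]
    return None  # other event types need separate handling

# Hypothetical events for a CUSTOMERS table with primary key ID.
update_event = {"op": "u",
                "before": {"ID": 1, "NAME": "old"},
                "after": {"ID": 1, "NAME": "new"}}
delete_event = {"op": "d", "before": {"ID": 1, "NAME": "new"}, "after": None}

print(to_statement(update_event, "customers", "ID"))
print(to_statement(delete_event, "customers", "ID"))
```

Writing inserts and updates as an upsert keeps the consumer idempotent, so replaying events after a restart does not create duplicates.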

Streaming thus expands the range of potential solutions. It provides visibility into data changes and enables decoupled, scalable processing. Crucially, we view streaming as an option and a means to an end, not as an end in itself.

Refactoring as Part of the Migration

Migration entails more than just data transfer.

It also involves technical cleanup.

In mature Oracle systems, business logic is often deeply embedded within stored procedures, triggers, or database-side functions. A direct "lift-and-shift" approach would merely transplant these dependencies into a new environment.

Therefore, we specifically evaluate:

- Which logic should remain within the database?

- Which functionality should be shifted into application services?

- Which transaction patterns need to be adapted?

- Which implicit assumptions are hidden within the system?

In doing so, we pay particular attention to transaction boundaries, locking behavior, and consistency requirements. When logic is extracted from the database, we must ensure that functional equivalence, performance, and data integrity are preserved, even within a distributed model.

Refactoring is, therefore, not merely an additional step, but an integral part of functional validation.

It ensures that the new environment is not only technically compatible but also structurally sound.
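A functional equivalence check for extracted logic can be sketched as a small comparison harness. The two `display_name` functions are hypothetical stand-ins for a database-side routine and its port to an application service. The example relies on one well-known implicit assumption: Oracle treats an empty string in a VARCHAR2 column as NULL, whereas PostgreSQL and most application code do not.

```python
from typing import Any, Callable, Iterable, List, Tuple

def find_mismatches(legacy: Callable[..., Any],
                    candidate: Callable[..., Any],
                    cases: Iterable[tuple]) -> List[Tuple[tuple, Any, Any]]:
    """Run both implementations on the same inputs and collect differing results."""
    mismatches = []
    for args in cases:
        expected, actual = legacy(*args), candidate(*args)
        if expected != actual:
            mismatches.append((args, expected, actual))
    return mismatches

# Stand-in for the database-side logic: because Oracle treats '' as NULL,
# the legacy routine falls back to a default for empty strings as well.
def legacy_display_name(name):
    return "unknown" if name is None or name == "" else name.strip()

# Naive port that overlooks the empty-string assumption.
def ported_display_name(name):
    return "unknown" if name is None else name.strip()

cases = [("Alice",), (" Bob ",), (None,), ("",)]
mismatches = find_mismatches(legacy_display_name, ported_display_name, cases)

for args, expected, actual in mismatches:
    print(f"input={args!r} legacy={expected!r} ported={actual!r}")
```

For these cases only the empty-string input differs. In practice, the test cases would be drawn from production-like data rather than written by hand, since the hidden assumptions are by definition the ones nobody thinks to list.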

What Matters to Us as a Team

As consultants and developers at PROTOS, we are drawn to projects like these for reasons that go beyond just the technological shift. What is crucial is transforming an organically evolved system into a platform that is controllable, comprehensible, and sustainable in the long term.

Architecture must remain explainable. Components must not be black boxes. Data flows must be transparent, both technically and functionally.

We consistently define infrastructure as code, integrate security into the overall concept from day one, and establish monitoring as a core component of the platform.

The result is not merely a migration, but an environment that is easy to understand, operate, and evolve.

What Changes in Day-to-Day Operations

After the migration, you gain more than just a new database.

Migrations proceed in a controlled and reproducible manner.

Data remains consistent.

Errors are visible.

Scaling is no longer a project; it becomes a matter of configuration.

Developers can integrate new services without jeopardizing existing systems.

DevOps teams operate an architecture they fully understand.

And management gains greater planning certainty.

Conclusion

A database migration is successful only when it delivers more than just a change of systems. It must be functionally accurate, make risks transparent, and provide lasting relief for operational workflows. Through a structured schema analysis, the targeted application of AWS SCT and DMS, and, where appropriate, supplementary streaming architectures, the migration becomes a controlled transition rather than a Herculean technical task.

For our clients, this translates into planning certainty, technical clarity, and the freedom to implement new digital initiatives upon a stable foundation.

This is what we at PROTOS stand for.